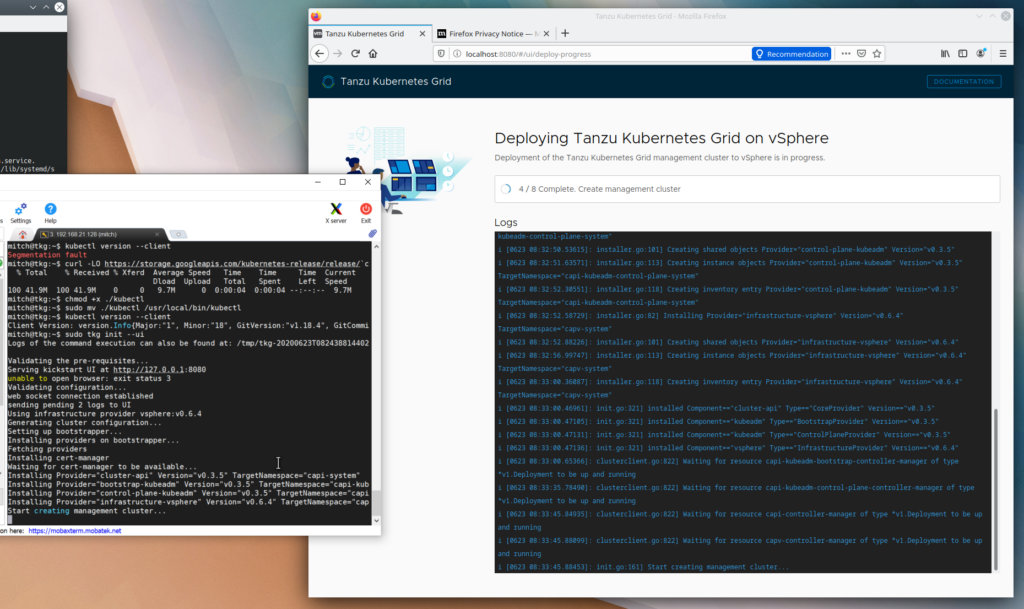

This is a follow-on from my last post, Deploying VMware Tanzu Kubernetes Grid on vSphere.

I wrote about deploying TKG from Windows mostly as an experiment, since I hadn’t seen many others writing about it online. While it worked fine and let me deploy the management cluster with no issues, I ended up reverting to a Linux VM for the work, because keeping Docker installed and running on my main machine was a bit clunky.

I’ve redeployed from scratch, mostly as a bit of practice. But now I want to actually get a workload up and running.

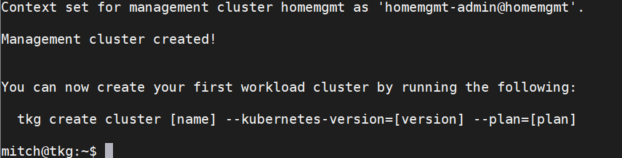

Once you’ve deployed your management cluster, TKG helpfully gives you the command to create a workload cluster.

You have the option of creating a dev cluster, which deploys with one control plane node and one worker node. A prod plan deploys with three control plane nodes and one worker node. You can always change the number of nodes after deployment by running the tkg scale cluster command.
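For reference, here’s a rough sketch of what scaling might look like. It assumes the TKG 1.x CLI, where tkg scale cluster accepts --worker-machine-count (and --controlplane-machine-count for control plane nodes); it’s worth double-checking with tkg scale cluster --help on your version. The scale_workers function is just a hypothetical wrapper for illustration.

```shell
# Hypothetical helper: change the number of worker nodes in a TKG cluster.
# Assumes the TKG 1.x CLI, run from the same machine you used to deploy
# the management cluster.
scale_workers() {
  local cluster="$1" count="$2"
  tkg scale cluster "$cluster" --worker-machine-count "$count"
}

# Example: take homecluster from one worker node to three.
# scale_workers homecluster 3
```

Keep in mind each extra node is another VM, so in a memory-constrained lab it’s worth scaling in small steps.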

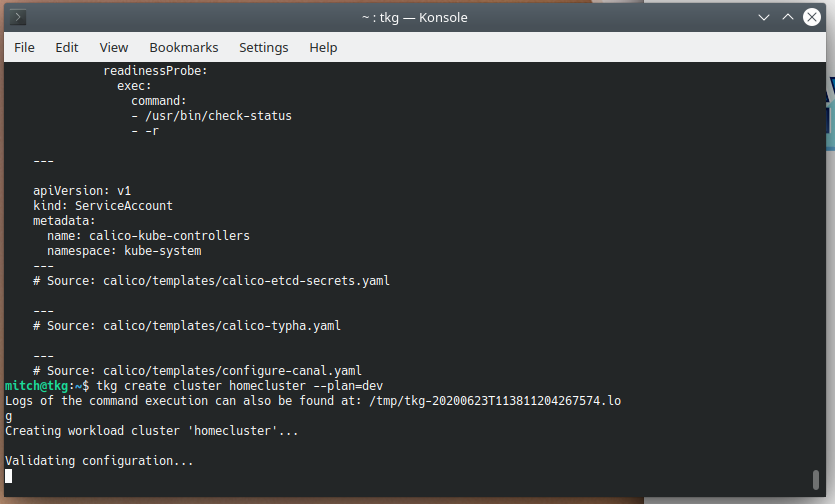

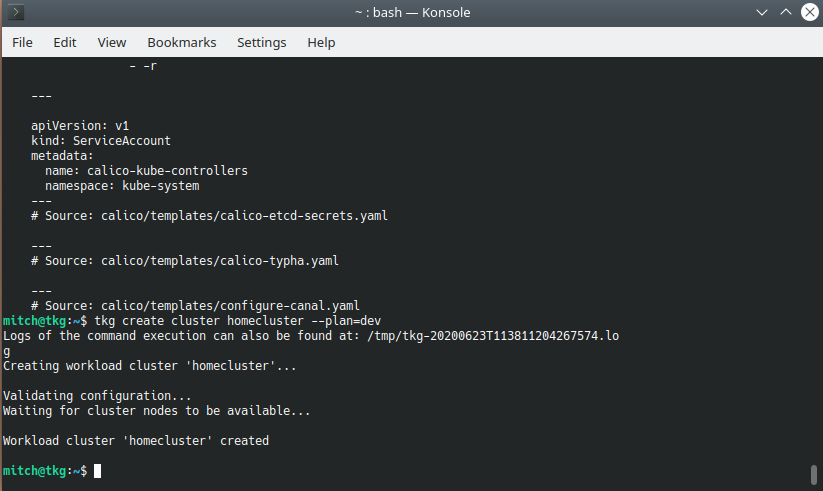

I’ve got limited memory in my current lab environment, so I’ll deploy a dev cluster. I’ll do a dry run first, which previews the YAML that TKG will use to create the cluster. This is handy for seeing what’s going on in the background, and the output can be saved for re-use later.

tkg create cluster homecluster --plan=dev --dry-run

Looks good! Now I want to create the cluster for real, so I simply omit --dry-run:

tkg create cluster homecluster --plan=dev
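Since the dry-run output can be saved for re-use, one simple way to do that is to redirect it to a file. This is a minimal sketch; save_cluster_yaml and the homecluster.yaml filename are hypothetical.

```shell
# Hypothetical helper: save the YAML that "tkg create cluster --dry-run"
# prints, so the same cluster definition can be reviewed or reused later.
save_cluster_yaml() {
  local cluster="$1" plan="$2" outfile="$3"
  tkg create cluster "$cluster" --plan="$plan" --dry-run > "$outfile"
}

# Example:
# save_cluster_yaml homecluster dev homecluster.yaml
```

My understanding of the TKG 1.x docs is that a saved file like this can later be applied with kubectl apply -f homecluster.yaml while your kubectl context points at the management cluster, but treat that as something to verify on your version.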

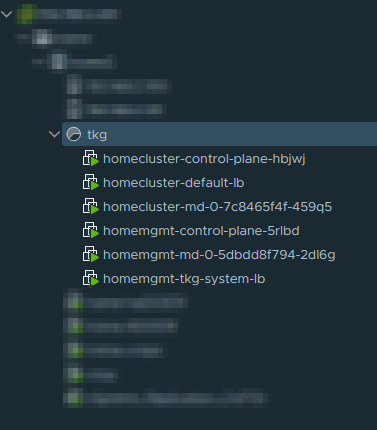

A few minutes later, you should have 3 extra VMs deployed – easy as that!

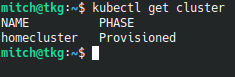

We can also quickly confirm the cluster is up and running with kubectl get cluster.
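One step worth calling out before deploying anything: kubectl get cluster queries the Cluster API objects on the management cluster, so kubectl needs to be pointed at the new workload cluster before you create the Yelb namespace. A minimal sketch, assuming the TKG 1.x CLI, where tkg get credentials adds a context named CLUSTER-admin@CLUSTER to your kubeconfig; use_workload_cluster is a hypothetical helper name.

```shell
# Hypothetical helper: fetch the workload cluster's kubeconfig and switch
# kubectl to it. Assumes the TKG 1.x CLI naming its context
# "CLUSTER-admin@CLUSTER".
use_workload_cluster() {
  local cluster="$1"
  tkg get credentials "$cluster" &&
    kubectl config use-context "${cluster}-admin@${cluster}"
}

# Example:
# use_workload_cluster homecluster
# kubectl get nodes   # should now list the workload cluster's nodes
```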

Now that we’ve got a running cluster, I’m going to deploy Yelb, a simple demo app that lets me quickly get something running on Kubernetes.

Deploy Yelb

kubectl create ns yelb

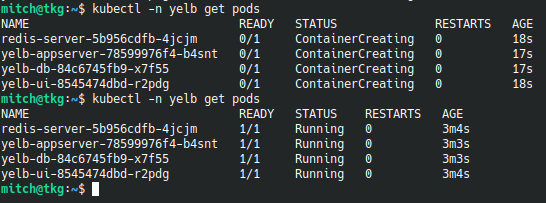

kubectl apply -f https://raw.githubusercontent.com/lamw/vmware-k8s-app-demo/master/yelb.yaml

Verify that Yelb is being created:

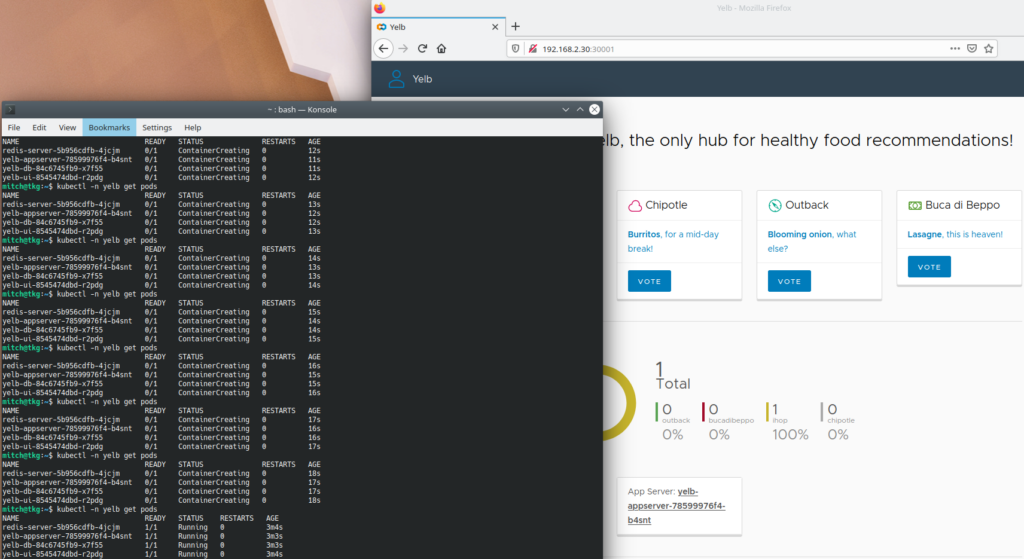

kubectl -n yelb get pods

The pods should show a status of ContainerCreating at first, then eventually Running.
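Rather than re-running kubectl get pods until everything shows Running, kubectl wait can block until the pods report Ready. A small sketch; wait_for_pods and the 300-second default timeout are my own choices, not anything Yelb requires.

```shell
# Hypothetical helper: block until every pod in a namespace reports Ready,
# instead of re-running "kubectl get pods" by hand. Returns non-zero if
# the timeout (default 300s) expires first.
wait_for_pods() {
  local ns="$1" timeout="${2:-300s}"
  kubectl -n "$ns" wait --for=condition=Ready pods --all --timeout="$timeout"
}

# Example:
# wait_for_pods yelb
```

Run it after the kubectl apply, because with --all there is nothing to wait on if no pods exist yet.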

Point your browser to http://IP:30001, where IP is the address of one of your cluster’s nodes, and you should see the Yelb interface! How easy was that?
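If you’re not sure which IP to use, the node addresses can be pulled out with kubectl get nodes. This sketch assumes the Yelb UI is exposed as a NodePort on 30001 (which is what the URL above implies) and that your nodes report an InternalIP; yelb_urls is a hypothetical helper. kubectl get nodes -o wide shows the same addresses in table form.

```shell
# Hypothetical helper: print a candidate Yelb URL for each node's
# InternalIP. Assumes a NodePort service on 30001 (override with $1),
# so any node's address should work.
yelb_urls() {
  local port="${1:-30001}" ip
  for ip in $(kubectl get nodes \
      -o jsonpath='{.items[*].status.addresses[?(@.type=="InternalIP")].address}'); do
    echo "http://${ip}:${port}"
  done
}

# Example:
# yelb_urls
```

If your nodes only report an ExternalIP, swapping the type in the jsonpath filter should do the trick.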

Next up – managing Kubernetes clusters using Tanzu Mission Control